Inverting a 16-element permutation may be done like this:

    for (int i = 0; i < 16; i++)
        inv[perm[i]] = i;

Computing a histogram of 16 nibbles may be done like this:

    for (int i = 0; i < 16; i++)
        hist[data[i]] += 1;

These different-sounding but similar-looking tasks have something in common: both can be built around a 16x16 bitmatrix transpose. That sounds silly. Why would anyone want to first construct a 16x16 bitmatrix, transpose it, and then do yet more processing to turn the resulting bitmatrix back into an array of numbers?

Because it turns out to be an efficiently-implementable operation, on some modern processors anyway.

If you know anything about the off-label application of GF2P8AFFINEQB, you may already suspect that it will be involved somehow (merely left-GF2P8AFFINEQB-ing by the identity matrix already results in some sort of 8x8 transpose, just horizontally mirrored), and it will be, but that's not the whole story.

First I will show not only how to do it with GF2P8AFFINEQB, but also how to find that solution programmatically using a SAT solver. There is nothing that fundamentally prevents a human from finding a solution by hand, but it seems difficult. Using a SAT solver to find a solution ex nihilo (requiring it to find both a sequence of instructions and their operands) is not that easy either (though that technique also exists). Thankfully, Geoff Langdale suggested a promising sequence of instructions:

nibble value, so now we have 16 bitfields with 1 bit set.

— Geoff Langdale (@geofflangdale) January 7, 2023

At this point, what we really want is a 16x16 transpose, not 4 8x8 transposes, but I'm pretty sure we can fake it by using VPERMB to redistribute our bytes (probably first grouping all top 8 bytes into the first 128b ...

The problem we have now (which the SAT solver will solve) is: under the constraint that for all X, f(X) = PERMB(GF2P8AFFINE(B, PERMB(X, A)), C) computes the transpose of X, what is a possible valuation of the variables A, B, and C? Note that the variables in the SAT problem correspond to constants in the resulting code, and the variable in the resulting code (X) is quantified out of the problem.

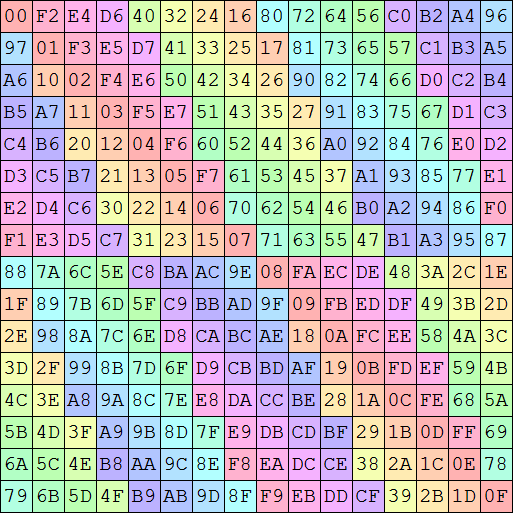

If you know a bit about SAT solving, that "for all X" sounds like trouble, requiring either creating a set of constraints for every possible value of X (henceforth, concrete values of X will be known as "examples"), or some advanced technique such as CEGIS to dynamically discover a smaller set of examples to base the constraints on. Luckily, since we are dealing with a bit-permutation, there are simple and small sets of examples that together sufficiently constrain the problem. For a 16-bit permutation, this set of values could be used:

- 1010101010101010

- 1100110011001100

- 1111000011110000

- 1111111100000000

For a 256-bit permutation, a similar pattern can be used, where each of the examples has 256 bits and there would be 8 of them. Note that if you read the columns of the values, they list out the indices of the corresponding columns, which is no coincidence. Constraining the problem with that set of examples essentially means asserting that f, when applied to the sequence 0..n-1, must result in the desired permutation. The way that I actually implemented this puts a column into one "abstract bit", so that it represents the index of the bit all in one place instead of spread out.
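To make the "columns list out the indices" observation concrete, here is a small scalar sketch for the 16-bit case. It treats a bit-permutation as a black box, feeds it the four examples, and reassembles for every output bit the index of the input bit that ended up there. The names `recover_bit_permutation` and `rotl3` are made up for this illustration (`rotl3` is just a stand-in permutation), and none of this is the SAT encoding itself.

```c
#include <stdint.h>

/* The four examples from the text: bit i of examples[k] is bit k of the index i. */
static const uint16_t examples[4] = { 0xAAAA, 0xCCCC, 0xF0F0, 0xFF00 };

/* Hypothetical stand-in for the function under test: rotate left by 3. */
static uint16_t rotl3(uint16_t x)
{
    return (uint16_t)((x << 3) | (x >> 13));
}

/* For every output bit i, reassemble the index of the input bit that landed there. */
static void recover_bit_permutation(uint16_t (*f)(uint16_t), uint8_t source[16])
{
    for (int i = 0; i < 16; i++)
        source[i] = 0;
    for (int k = 0; k < 4; k++) {
        uint16_t y = f(examples[k]);
        for (int i = 0; i < 16; i++)
            source[i] |= (uint8_t)(((y >> i) & 1u) << k);
    }
}
```

Calling `recover_bit_permutation(rotl3, source)` should leave `source[i]` equal to `(i + 13) % 16`, since a left-rotation by 3 moves input bit `i - 3` to output bit `i`.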

Implementing a "left GF2P8AFFINEQB" (multiplying a constant matrix on the left by a variable matrix on the right) in CNF, operating on "abstract bits" (8 variables each), is relatively straightforward. Every (abstract) bit of the result is the XOR of the AND of some (abstract) bits. Writing that down is mostly a chore, but there is one interesting aspect: the XOR can be turned into an OR, since we know that we're multiplying by a permutation matrix. In CNF, OR is simpler than XOR, and easier for the solver to reason through.

VPERMB is more difficult to implement, given that the permutation operand is a variable (if it was a constant, we could just permute the abstract bits without generating any new constraints). To make it easier, I represent the permutation operand as a 32x32 permutation matrix, letting me create a bunch of simple ternary constraints of the form (¬P(i, j) ∨ ¬A(j) ∨ R(i)) ∧ (¬P(i, j) ∨ A(j) ∨ ¬R(i)) (read: if P(i, j), then A(j) must be equal to R(i)). The same thing can be used to implement VPSHUFB, with additional constraints on the permutation matrix (to prevent cross-slice movement).
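As a rough sketch of what generating those ternary constraints could look like, the code below emits the two clauses for every pair (i, j) and includes a tiny checker that evaluates the clauses under a given assignment. Several things are assumptions made only for this example: the variable numbering (`var_P`, `var_A`, `var_R`), the `Clause` type, and the simplification that each element is a single Boolean variable (in the setup described above an abstract bit is 8 variables, so the pair of clauses would be repeated for each of the 8). The constraints that make P an actual permutation matrix are not included.

```c
#include <stddef.h>

typedef struct { int lit[3]; } Clause;   /* DIMACS-style: +v means v, -v means NOT v */

/* Hypothetical 1-based variable numbering, chosen only for this sketch. */
static int var_P(int n, int i, int j) { return 1 + i * n + j; }
static int var_A(int n, int j)        { return 1 + n * n + j; }
static int var_R(int n, int i)        { return 1 + n * n + n + i; }

/* Emit (!P(i,j) | !A(j) | R(i)) and (!P(i,j) | A(j) | !R(i)) for all i, j.
   out must have room for 2 * n * n clauses. Returns the number of clauses. */
static size_t emit_permute_clauses(int n, Clause *out)
{
    size_t count = 0;
    for (int i = 0; i < n; i++) {
        for (int j = 0; j < n; j++) {
            int p = var_P(n, i, j), a = var_A(n, j), r = var_R(n, i);
            Clause c1 = { { -p, -a,  r } };
            Clause c2 = { { -p,  a, -r } };
            out[count++] = c1;
            out[count++] = c2;
        }
    }
    return count;
}

/* value[v] is the truth value of variable v; returns 1 if every clause holds. */
static int all_satisfied(const Clause *clauses, size_t count, const unsigned char *value)
{
    for (size_t k = 0; k < count; k++) {
        int ok = 0;
        for (int t = 0; t < 3; t++) {
            int lit = clauses[k].lit[t];
            int v = lit > 0 ? lit : -lit;
            if ((value[v] != 0) == (lit > 0))
                ok = 1;
        }
        if (!ok)
            return 0;
    }
    return 1;
}
```

With P set to a permutation matrix and R set to the correspondingly permuted A, `all_satisfied` should return 1, and flipping any single R(i) should make it return 0.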

Running that code, at least on my PC at this time[1], results in (with some whitespace manually added):

    __m256i t0 = _mm256_permutexvar_epi8(_mm256_setr_epi8(
        14, 12, 10, 8, 6, 4, 2, 0,
        30, 28, 26, 24, 22, 20, 18, 16,
        15, 13, 11, 9, 7, 5, 3, 1,
        31, 29, 27, 25, 23, 21, 19, 17), input);
    __m256i t1 = _mm256_gf2p8affine_epi64_epi8(_mm256_set1_epi64x(0x1080084004200201), t0, 0);
    __m256i t2 = _mm256_shuffle_epi8(t1, _mm256_setr_epi8(
        0, 8, 1, 9, 3, 11, 5, 13,
        7, 15, 2, 10, 4, 12, 6, 14,
        0, 8, 1, 9, 3, 11, 5, 13,
        7, 15, 2, 10, 4, 12, 6, 14));

So that's it. That's the answer[2]. If you want to transpose a 16x16 bitmatrix, on a modern PC (this code requires AVX512_VBMI and AVX512_GFNI[3]), it's fairly easy and cheap, it's just not so easy to find this solution to begin with.
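For readers without AVX512 hardware at hand, the three instructions can be modelled in plain scalar C to sanity-check the constants. The sketch below is such a model, not the real thing: the function names are invented here, the 256-bit vectors are represented as arrays of 32 bytes (byte 0 being the lowest byte, so row r of the matrix occupies bytes 2r and 2r+1), and the GF2P8AFFINEQB model follows Intel's published pseudocode as far as I understand it (result bit i is the parity of matrix byte 7-i ANDed with the source byte, with the immediate fixed at 0).

```c
#include <stdint.h>

/* VPERMB (256-bit): out[n] = src[idx[n] & 31]. */
static void model_vpermb(const uint8_t idx[32], const uint8_t src[32], uint8_t out[32])
{
    for (int n = 0; n < 32; n++)
        out[n] = src[idx[n] & 31];
}

/* VPSHUFB (256-bit): lookup confined to each 128-bit lane, zero if index bit 7 is set. */
static void model_vpshufb(const uint8_t src[32], const uint8_t idx[32], uint8_t out[32])
{
    for (int n = 0; n < 32; n++)
        out[n] = (idx[n] & 0x80) ? 0 : src[(n & 16) | (idx[n] & 15)];
}

static int parity8(uint8_t x)
{
    x ^= x >> 4;
    x ^= x >> 2;
    x ^= x >> 1;
    return x & 1;
}

/* GF2P8AFFINEQB with imm8 = 0: x supplies the bytes, a supplies one 8x8 matrix per qword. */
static void model_gf2p8affine(const uint8_t x[32], const uint8_t a[32], uint8_t out[32])
{
    for (int q = 0; q < 4; q++) {
        for (int k = 0; k < 8; k++) {
            uint8_t result = 0;
            for (int i = 0; i < 8; i++)
                result |= (uint8_t)(parity8(a[8 * q + 7 - i] & x[8 * q + k]) << i);
            out[8 * q + k] = result;
        }
    }
}

/* Scalar model of the VPERMB, GF2P8AFFINEQB, VPSHUFB sequence with the constants above. */
static void transpose16x16_model(const uint8_t input[32], uint8_t output[32])
{
    static const uint8_t idx0[32] = {
        14, 12, 10, 8, 6, 4, 2, 0,   30, 28, 26, 24, 22, 20, 18, 16,
        15, 13, 11, 9, 7, 5, 3, 1,   31, 29, 27, 25, 23, 21, 19, 17 };
    static const uint8_t idx2[32] = {
        0, 8, 1, 9, 3, 11, 5, 13,   7, 15, 2, 10, 4, 12, 6, 14,
        0, 8, 1, 9, 3, 11, 5, 13,   7, 15, 2, 10, 4, 12, 6, 14 };
    uint8_t constant[32], t0[32], t1[32];
    for (int n = 0; n < 32; n++)
        constant[n] = (uint8_t)(0x1080084004200201ULL >> (8 * (n & 7)));
    model_vpermb(idx0, input, t0);
    model_gf2p8affine(constant, t0, t1);   /* the data is the matrix operand */
    model_vpshufb(t1, idx2, output);
}
```

The output of `transpose16x16_model` can be compared against a naive bit-by-bit transpose of the same 32 bytes. Under the semantics assumed here, every byte of the constant has a single bit set, so each result bit of the affine step is just one selected input bit.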

Using this transpose to invert a 16-element permutation is pretty easy, for example using _mm256_sllv_epi16 to construct the matrix and _mm256_popcnt_epi16(_mm256_sub_epi16(t2, _mm256_set1_epi16(1))) (sadly there is no SIMD version of TZCNT .. yet) to convert the bit-masks back into indices. It may be tempting to try to use a mirrored matrix and leading-zero count, which AVX512 does offer, but it only offers the DWORD and QWORD versions VPLZCNTD/Q.

Making a histogram is even simpler, using only _mm256_popcnt_epi16(t2) to convert the matrix into counts.
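The two conversions can be written out in scalar form to show why they work. This is only a reference model under some assumptions: `transpose16x16_naive` is a slow stand-in for the vectorized transpose, row i of the matrix is a 16-bit word with bit `perm[i]` (or `data[i]`) set, and the function names are invented for this sketch.

```c
#include <stdint.h>

/* Slow reference transpose: bit c of out[r] is bit r of in[c]. */
static void transpose16x16_naive(const uint16_t in[16], uint16_t out[16])
{
    for (int r = 0; r < 16; r++) {
        uint16_t row = 0;
        for (int c = 0; c < 16; c++)
            row |= (uint16_t)(((in[c] >> r) & 1u) << c);
        out[r] = row;
    }
}

static uint8_t popcount16(uint16_t x)
{
    uint8_t count = 0;
    while (x) {
        x &= (uint16_t)(x - 1);   /* clear the lowest set bit */
        count++;
    }
    return count;
}

static void invert_permutation_model(const uint8_t perm[16], uint8_t inv[16])
{
    uint16_t m[16], t[16];
    for (int i = 0; i < 16; i++)
        m[i] = (uint16_t)(1u << perm[i]);            /* what the variable shift builds */
    transpose16x16_naive(m, t);
    for (int j = 0; j < 16; j++)
        inv[j] = popcount16((uint16_t)(t[j] - 1));   /* trailing zero count of a one-hot mask */
}

static void histogram_model(const uint8_t data[16], uint8_t hist[16])
{
    uint16_t m[16], t[16];
    for (int i = 0; i < 16; i++)
        m[i] = (uint16_t)(1u << data[i]);
    transpose16x16_naive(m, t);
    for (int j = 0; j < 16; j++)
        hist[j] = popcount16(t[j]);                  /* row j marks every i with data[i] == j */
}
```

After the transpose, row j has bit i set exactly when element i had the value j. For a permutation that is a single bit, so subtracting 1 and counting bits gives its position. For arbitrary nibbles it is a set of positions, and counting them gives the histogram entry.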

And for my next trick, I will now not transpose the matrix

What if we didn't transpose that matrix? Does that even make sense? Well, at least for the two applications that I focused on, what we really need is not so much the transpose of the matrix, but any matrix such that:

- Every bit of the original matrix occurs exactly once in the result.

- Each row of the result contains all bits from a particular column.

- The permutation within each row is "regular" enough that we can work with it. We don't need this when making a histogram (as Geoff already noted in one of his tweets).

There is no particular requirement on the order of the rows, any row-permutation we end up with is easy to undo.

The first two constraints leave plenty of options open, but the last constraint is quite vague. Too vague for me to do something such as searching for the best not-quite-transpose, so I don't promise to have found it. But here is a solution: rotate every row by its index, then rotate every column by its index.
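A scalar sketch of that rotate-rows-then-rotate-columns idea is below. The directions are an assumption of this sketch: rows are rotated left (towards higher bit positions) and columns are rotated upward (towards lower row numbers), which is one combination for which every output row collects the bits of a single input column. The function names are made up for illustration.

```c
#include <stdint.h>

static uint16_t rotl16(uint16_t x, unsigned k)
{
    k &= 15;
    return (uint16_t)((x << k) | (x >> ((16 - k) & 15)));
}

/* Rotate row r left by r, then rotate column c upward by c. */
static void not_transpose_simple(const uint16_t in[16], uint16_t out[16])
{
    uint16_t rotated[16];
    for (int r = 0; r < 16; r++)
        rotated[r] = rotl16(in[r], (unsigned)r);
    for (int r = 0; r < 16; r++) {
        uint16_t row = 0;
        for (int c = 0; c < 16; c++)
            row |= (uint16_t)(rotated[(r + c) & 15] & (1u << c));   /* column c comes from c rows further down */
        out[r] = row;
    }
}
```

With these directions, the bit at row r and column c of the input ends up in row (16 - c) mod 16 at bit position (r + c) mod 16. So each output row holds exactly one input column, rotated by an amount that depends only on the row, and the rows come out in a fixed, easily undone order.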

At least, that's the starting point. Rotating the columns requires 3 rounds of blending a vector with a cross-slice-permuted copy of that vector, and a VPERMQ sandwiched by two VPSHUFBs to rotate the last 8 columns by 8. That's a lot of cross-slice permuting, but most of it can be avoided by modifying the overall permutation slightly:

- Exchange the off-diagonal quadrants.

- Rotate each row by its index.

- For each quadrant individually, rotate each column by its index.

Here is some attempt at illustrating that process, feel free to skip past it

These three steps are implementable in AVX2:

- Exchanging the off-diagonal quadrants can be done by gathering the quadrants into QWORDs, permuting them, and shuffling the QWORDs back into quadrants.

- Rotating the rows can be done with VPMULLW (used as a variable shift-left), VPMULHUW (used as a variable shift-right), and VPOR.

- Rotating the columns can be done by conditionally rotating the columns with odd indices by 1, conditionally rotating the columns that have the second bit of their index set by 2, and conditionally rotating the columns that have the third bit of their index set by 4. The rotations can be done using VPALIGNR[4], the conditionality can be implemented with blending, but since this needs to be a bit-granular blend, it cannot be performed using VPBLENDVB.
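Two of these building blocks are easy to model on a single 16-bit lane. The sketch below shows a rotate built from the low and high halves of a multiplication by a power of two (what VPMULLW and VPMULHUW compute per lane), and a bit-granular blend built from AND, ANDNOT and OR. The function names are invented for this illustration.

```c
#include <stdint.h>

/* Rotate left by k (0..15) using the two halves of x * 2^k. */
static uint16_t rotl16_by_multiply(uint16_t x, unsigned k)
{
    uint32_t product = (uint32_t)x * ((uint32_t)1 << k);
    uint16_t low  = (uint16_t)product;           /* VPMULLW: the bits shifted left */
    uint16_t high = (uint16_t)(product >> 16);   /* VPMULHUW: the bits that wrapped around */
    return (uint16_t)(low | high);
}

/* Take the bits selected by mask from keep and all other bits from take. */
static uint16_t blend_bits(uint16_t keep, uint16_t take, uint16_t mask)
{
    return (uint16_t)((keep & mask) | (take & (uint16_t)~mask));
}
```

For example, `rotl16_by_multiply(0x8001, 1)` gives `0x0003`: the low half of the product contributes `0x0002` and the high half contributes the wrapped-around top bit.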

In total, here is how I don't transpose a 16x16 matrix with AVX2, hopefully there is a better way:

    __m256i nottranspose16x16(__m256i x)
    {
        // exchange off-diagonal quadrants
        x = _mm256_shuffle_epi8(x, _mm256_setr_epi8(
            0, 2, 4, 6, 8, 10, 12, 14, 1, 3, 5, 7, 9, 11, 13, 15,
            0, 2, 4, 6, 8, 10, 12, 14, 1, 3, 5, 7, 9, 11, 13, 15));
        x = _mm256_permute4x64_epi64(x, _MM_SHUFFLE(3, 1, 2, 0));
        x = _mm256_shuffle_epi8(x, _mm256_setr_epi8(
            0, 8, 1, 9, 2, 10, 3, 11, 4, 12, 5, 13, 6, 14, 7, 15,
            0, 8, 1, 9, 2, 10, 3, 11, 4, 12, 5, 13, 6, 14, 7, 15));
        // rotate every row by its y coordinate
        __m256i shifts = _mm256_setr_epi16(
            1 << 0, 1 << 1, 1 << 2, 1 << 3,
            1 << 4, 1 << 5, 1 << 6, 1 << 7,
            1 << 8, 1 << 9, 1 << 10, 1 << 11,
            1 << 12, 1 << 13, 1 << 14, 1 << 15);
        __m256i sll = _mm256_mullo_epi16(x, shifts);
        __m256i srl = _mm256_mulhi_epu16(x, shifts);
        x = _mm256_or_si256(sll, srl);
        // within each quadrant independently,
        // rotate every column by its x coordinate
        __m256i x0, x1, m;
        // rotate by 4
        m = _mm256_set1_epi8(0x0F);
        x0 = _mm256_and_si256(x, m);
        x1 = _mm256_andnot_si256(m, _mm256_alignr_epi8(x, x, 8));
        x = _mm256_or_si256(x0, x1);
        // rotate by 2
        m = _mm256_set1_epi8(0x33);
        x0 = _mm256_and_si256(x, m);
        x1 = _mm256_andnot_si256(m, _mm256_alignr_epi8(x, x, 4));
        x = _mm256_or_si256(x0, x1);
        // rotate by 1
        m = _mm256_set1_epi8(0x55);
        x0 = _mm256_and_si256(x, m);
        x1 = _mm256_andnot_si256(m, _mm256_alignr_epi8(x, x, 2));
        x = _mm256_or_si256(x0, x1);
        return x;
    }

Using that not-transpose to invert a 16-element permutation takes some extra steps that, without AVX512, are about as annoying as not-transposing the matrix was.

- Constructing the matrix is more difficult. AVX2 has shift-by-variable, but not for 16-bit elements.[5] There are various work-arounds, such as using DWORDs and then narrowing, of course (boring). Another (funnier) option is to duplicate every byte, add 0xF878 to every word, then use VPSHUFB in lookup-table-mode to index into a table of powers of two. Having added 0x78 to every low byte of every word, that byte will be mapped to zero if it was 8 or higher, or otherwise two to the power of that byte. The high byte, having 0xF8 added to it, will be mapped to 0 if it was below 8, or otherwise to two to the power of that byte minus 8. As wild as that sounds, it is pretty fast, costing only 5 cheap instructions (whereas widening to DWORDs, shifting, and narrowing, would be worse than it sounds). Perhaps there is a better way.

- Converting masks back into indices is more difficult due to the lack of trailing zero count, leading zero count, or even popcount. What AVX2 does have, is .. VPSHUFB again. We can multiply by an order-4 de Bruijn sequence and use VPSHUFB to map the results to the indices of the set bits.

- Then we have indices, but since the rows and columns were somewhat arbitrarily permuted, they must still be mapped back into something that makes sense. Fortunately that's no big deal, a modular subtraction (or addition, same thing really) cancels out the row-rotations, and yet another VPSHUFB cancels out the strange order that the rows are in. Fun detail: the constants that are subtracted and the permutation are both 0, 7, 6, 5, 4, 3, 2, 1, 8, 15, 14, 13, 12, 11, 10, 9.
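The first two steps can be modelled on a single element to see how the constants work. In this scalar sketch, `pshufb_lookup` mimics one byte of a VPSHUFB table lookup (zero when the top bit of the index is set, otherwise the table entry selected by the low 4 bits). The constants 0x78, 0xF8, 0x9AF0 and both tables are the ones described above, while the function names are made up here.

```c
#include <stdint.h>

/* One byte of VPSHUFB used as a 16-entry lookup table. */
static uint8_t pshufb_lookup(const uint8_t table[16], uint8_t index)
{
    return (index & 0x80) ? 0 : table[index & 0x0F];
}

/* Index (0..15) to one-hot 16-bit mask, via the add-0xF878 trick. */
static uint16_t index_to_mask(uint8_t index)
{
    static const uint8_t pow2[16] = {
        1, 2, 4, 8, 16, 32, 64, 128,
        1, 2, 4, 8, 16, 32, 64, 128 };
    uint8_t low  = (uint8_t)(index + 0x78);   /* top bit set if index >= 8 */
    uint8_t high = (uint8_t)(index + 0xF8);   /* top bit set if index < 8 */
    return (uint16_t)(pshufb_lookup(pow2, low) | (pshufb_lookup(pow2, high) << 8));
}

/* One-hot 16-bit mask to index, via the de Bruijn multiplication. */
static uint8_t mask_to_index(uint16_t mask)
{
    static const uint8_t table[16] = {
        0, 1, 2, 5, 3, 9, 6, 11, 15, 4, 8, 10, 14, 7, 13, 12 };
    uint16_t hash = (uint16_t)((((uint32_t)mask * 0x9AF0u) >> 16) & 0x000F);
    return pshufb_lookup(table, (uint8_t)hash);
}
```

For example, `index_to_mask(10)` looks up entry 2 of the table for the high byte (giving 4) and gets zero for the low byte, so the result is `0x0400`. Going the other way, `mask_to_index(0x0400)` hashes to 11 and the table maps that back to 10.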

All put together:

    void invert_permutation_avx2(uint8_t *p, uint8_t *inv)
    {
        __m256i v = _mm256_cvtepu8_epi16(_mm_loadu_si128((__m128i*)p));
        // indexes to masks
        v = _mm256_or_si256(v, _mm256_slli_epi64(v, 8));
        v = _mm256_add_epi8(v, _mm256_set1_epi16(0xF878));
        __m256i m = _mm256_shuffle_epi8(_mm256_setr_epi8(
            1, 2, 4, 8, 16, 32, 64, 128,
            1, 2, 4, 8, 16, 32, 64, 128,
            1, 2, 4, 8, 16, 32, 64, 128,
            1, 2, 4, 8, 16, 32, 64, 128), v);
        // ???
        m = nottranspose16x16(m);
        // masks to indexes
        __m256i deBruijn = _mm256_and_si256(_mm256_mulhi_epu16(m, _mm256_set1_epi16(0x9AF0)), _mm256_set1_epi16(0x000F));
        __m128i i = _mm_packs_epi16(_mm256_castsi256_si128(deBruijn), _mm256_extracti128_si256(deBruijn, 1));
        i = _mm_shuffle_epi8(_mm_setr_epi8(
            0, 1, 2, 5, 3, 9, 6, 11, 15, 4, 8, 10, 14, 7, 13, 12), i);
        // un-mess-up the indexes
        i = _mm_sub_epi8(i, _mm_setr_epi8(0, 7, 6, 5, 4, 3, 2, 1, 8, 15, 14, 13, 12, 11, 10, 9));
        i = _mm_and_si128(i, _mm_set1_epi8(0x0F));
        i = _mm_shuffle_epi8(i, _mm_setr_epi8(0, 7, 6, 5, 4, 3, 2, 1, 8, 15, 14, 13, 12, 11, 10, 9));
        _mm_storeu_si128((__m128i*)inv, i);
    }

To make a histogram, emulate VPOPCNTW using, you guessed it, PSHUFB.
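The usual way to do that is a nibble lookup table: split every byte into two nibbles, look up the bit count of each nibble, and add the results. A scalar sketch of the same idea for one 16-bit word follows (the function name is invented here). In the vectorized form the two table lookups per byte would be PSHUFBs, and the two byte counts of each word would then still have to be added together.

```c
#include <stdint.h>

/* Population count of a 16-bit word using a 16-entry table of nibble counts. */
static uint8_t popcount16_nibble_lut(uint16_t x)
{
    static const uint8_t lut[16] = {
        0, 1, 1, 2, 1, 2, 2, 3,
        1, 2, 2, 3, 2, 3, 3, 4 };
    return (uint8_t)(lut[x & 15] + lut[(x >> 4) & 15] +
                     lut[(x >> 8) & 15] + lut[x >> 12]);
}
```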

The end

This post is, I think, one of the many examples of how AVX512 can be an enormous improvement compared to AVX2 even when not using 512-bit vectors. Every step of every problem had a simple solution in AVX512 (even if it was not always easy to find it). With AVX2, everything felt "only barely possible".

"As complicated as it is, is this actually faster than scalar code?" Yes actually, but feel free to benchmark it yourself. The AVX2 version being somewhat more efficient than scalar code is not really the point of this post anyway. The AVX512 version is nice and efficient, I'm showing an AVX2 version mostly to show how hard it is to create it.[6]

Transposing larger matrices with AVX512 can be done by first doing some quadrant-swapping (also used at the start of the not-transpose) until the bits that need to end up together in one 512-bit block are all in there, and then a VPERMB, VGF2P8AFFINEQB, VPERMB sequence with the right constants (which can be found using the techniques that I described) can put the bits in their final positions. But well, I already did that, so there you go.

A proper transpose can be done in AVX2 of course, for example using 4 rounds of quadrant-swapping. Implementations of that already exist so I thought that would be boring to talk about, but there is an interesting aspect to that technique that is often not mentioned: every round of quadrant-swapping can be seen as exchanging two bits of the indices. Swapping the big 8x8 quadrants swaps bits 3 and 7 of the indices, transposing the 2x2 submatrices swaps bits 0 and 4 of the indices. From that point of view, it's easy to see that the order in which the four steps are performed does not matter - no matter the order, the lower nibble of the index is swapped with the higher nibble of the index.
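The index-bit view can be tried out in scalar code. In the sketch below (names invented for illustration), a bit at row r and column c has index 16r + c, and `swap_index_bits(m, j)` with j in {1, 2, 4, 8} exchanges every bit whose row has bit j clear and column has bit j set with its counterpart whose row has bit j set and column has bit j clear. That swaps the corresponding bit of the low nibble of the index with the same bit of the high nibble.

```c
#include <stdint.h>

/* One round of quadrant swapping with block size j (1, 2, 4 or 8). */
static void swap_index_bits(uint16_t m[16], int j)
{
    /* masks[j] selects the columns whose index has bit j clear */
    static const uint16_t masks[9] = { 0, 0x5555, 0x3333, 0, 0x0F0F, 0, 0, 0, 0x00FF };
    uint16_t mask = masks[j];
    for (int k = 0; k < 16; k++) {
        if (k & j)
            continue;   /* row k is paired with row k + j */
        uint16_t t = (uint16_t)(((m[k] >> j) ^ m[k + j]) & mask);
        m[k + j] ^= t;
        m[k] ^= (uint16_t)(t << j);
    }
}

/* Full transpose as four rounds, performed in whatever order is given. */
static void transpose_by_rounds(uint16_t m[16], const int order[4])
{
    for (int s = 0; s < 4; s++)
        swap_index_bits(m, order[s]);
}
```

Calling `transpose_by_rounds` with the orders `{8, 4, 2, 1}`, `{1, 2, 4, 8}` or `{2, 8, 1, 4}` should give the same result each time, because each round touches a different pair of index bits.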

[1] While MiniSAT (which this program uses as its SAT solver) is a "deterministic solver" in the sense of definitely finding a satisfying valuation if there is one, it is not deterministic in the sense of guaranteeing that the same satisfying valuation is found every time the solver is run on the same input.

[2] Not the unique answer, there are multiple solutions.

[3] But not 512-bit vectors.

[4] Nice! It's not common to see a 256-bit VPALIGNR being useful, due to it not being the natural widening of 128-bit PALIGNR, but acting more like two PALIGNRs side-by-side (with the same shifting distance).

[5] Intel, why do you keep doing this.

[6] Also as an excuse to use PSHUFB for everything.